Sora is a text-to-video AI model developed by OpenAI. First introduced in early 2024, it is now in its second major version, Sora 2, which is positioned as a shift from a video generator toward a “World Simulator”: the model is designed to produce high-quality video that respects real-world physics and cause-and-effect relationships.

Here is a summary of its key features and details.

| Feature | Description |
|---|---|
| Developer | OpenAI |
| Core Technology | Diffusion Transformer (DiT) & “Space-time Patches” |
| Key Model Version | Sora 2 (launched in late 2025) |
| Video Generation | Text/Image/Video-to-Video, up to 60 seconds for complex scenes. Pro users can generate up to 25 seconds via the app/website. |
| Native Audio Generation | Yes, with realistic sound effects synchronized to the video. |
| Accessibility | Available via Sora App (iOS) and web. Launched in Taiwan, Thailand, and Vietnam in October 2025 and open to download without waitlist. |
| Business Model | Freemium. Paid subscription (Pro) for extended features. |
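
To make the “Space-time Patches” entry more concrete: the general idea is to cut a video into small blocks that span a few frames and a small pixel region, then flatten each block into one token for the transformer. The snippet below is a minimal sketch of that idea only, not OpenAI's implementation. The function name `to_spacetime_patches`, the patch sizes, and the toy clip are all assumptions chosen for illustration, and it works on raw pixels where a production model would more likely operate on a compressed latent representation.

```python
import numpy as np

def to_spacetime_patches(video, pt, ph, pw):
    """Split a (T, H, W, C) video array into flattened spacetime patches.

    Hypothetical helper for illustration. Returns an array of shape
    (num_patches, pt * ph * pw * C), one row per patch.
    """
    T, H, W, C = video.shape
    if T % pt or H % ph or W % pw:
        raise ValueError("video dimensions must be divisible by the patch size")
    # Split each axis into (number of patches, patch size).
    x = video.reshape(T // pt, pt, H // ph, ph, W // pw, pw, C)
    # Move the three patch-index axes to the front, patch contents to the back.
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)
    # One row per patch: the token sequence a transformer would consume.
    return x.reshape(-1, pt * ph * pw * C)

# Assumed toy clip: 8 frames of 32x32 RGB with random pixel values.
video = np.random.default_rng(0).random((8, 32, 32, 3))
tokens = to_spacetime_patches(video, pt=2, ph=8, pw=8)
print(tokens.shape)  # (64, 384)
```

Each of the 64 rows covers 2 frames of an 8x8 pixel region, so a longer or larger clip simply yields more tokens rather than requiring a different model input shape.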

🎬 Key Features and Capabilities

World Simulation, Not Just Video Generation: The key advancement of Sora 2 lies in its ability to model the physics of a scene. Instead of stitching images together, it understands and simulates how objects interact over time, maintaining consistency (e.g., a character’s clothing stays the same throughout a video) and allowing for physically plausible outcomes (e.g., a basketball that can realistically miss a shot).

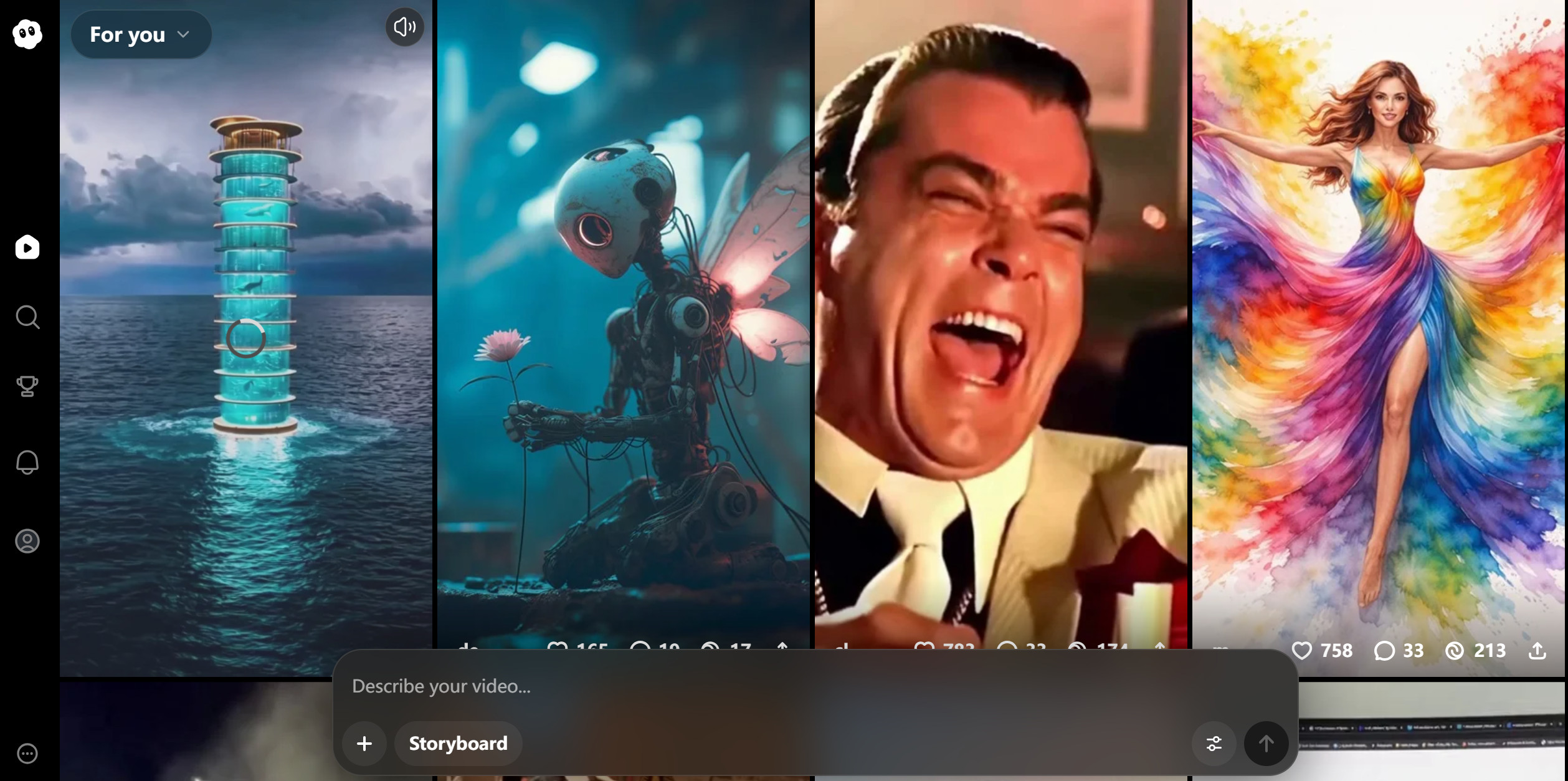

Cameo – A Social and Creative Hub: This standout feature allows users to create a digital version of themselves (or a friend with permission) and insert it into any AI-generated scene. This transforms Sora from a tool into a “generative social network”, sparking collaborative and remixed content creation.

Professional-Grade Quality: Independent tests show that Sora excels in physics understanding, complex scene handling, and generating long, coherent videos. It can create highly detailed scenes with accurate motion, making it suitable for professional applications.

Advanced Creative Controls: Pro users have access to a storyboard feature on the web, allowing for better planning of multi-scene video projects.

💼 Applications and Use Cases

Sora 2 is revolutionizing content creation across industries by drastically reducing time and cost.

Marketing & Advertising: Creates high-quality product ads and promotional videos. One analysis suggested that producing a car advertisement could shrink from a one-month production cycle to about 20 minutes, with a corresponding drop in cost.

Content Creation: Enables individual creators (e.g., travel or food bloggers) to produce content that would be impossible or too expensive to film.

Education & Training: Quickly generates explanatory or historical videos, with production cycles shrinking from months to minutes.

Social & Entertainment: The Cameo feature fosters a new form of social interaction and entertainment, with users creating personalized, shareable videos.

⚖️ Safety, Copyright, and Regional Considerations

Safety & Content Moderation: OpenAI has implemented multiple safety layers, including strict content filters for the Cameo feature to prevent misuse. All generated videos carry a visible watermark plus embedded provenance signals (C2PA metadata) for traceability.

Copyright: The platform has moved to an “opt-in” policy for copyrighted characters, meaning they will not appear unless explicitly authorized by the rights holder.

Global Availability (GEO): The Sora App is expanding globally. As of October 2025, it launched in key Asian markets like Taiwan, Thailand, and Vietnam without an invitation requirement, indicating a targeted expansion strategy.

In summary, Sora 2 is more than an AI video tool; it’s a platform for simulating realities and social creation. Its strength lies in its superior physics simulation, innovative social features like Cameo, and its potential to democratize high-quality video production globally.
